A New Set Of Chips Is Coming

The accolades keep raining down on Nvidia (NVDA) CEO Jensen Huang. I even heard one person say he is the new Steve Jobs.

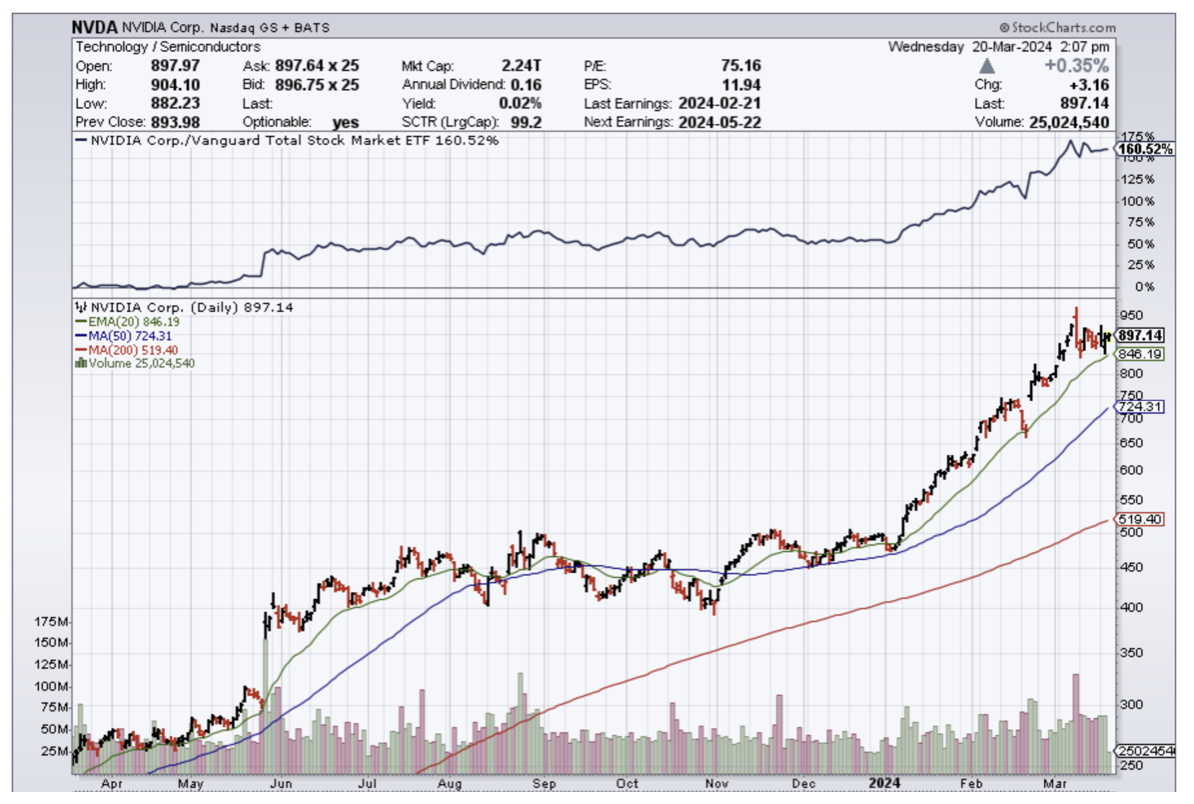

Those are lofty compliments for a guy who stayed under the radar for a long time. However, he can’t hide anymore, as NVDA’s share price has skyrocketed and the company’s valuation today stands at over $2.2 trillion.

NVDA should be at the core of every tech portfolio. The company is critical to the facilitation of AI in the present and the future. So when Huang talks publicly, people listen, and that’s what just happened.

Jensen Huang described what he sees ahead for artificial intelligence and Nvidia. He believes it is something so vast and transformative that computing and how we use it will never be the same.

Huang gave the keynote address on Monday to open Nvidia's GTC 2024 conference. He focused on the transformative influence he expects his company's Blackwell family of chips and related systems to have, first on technology and artificial intelligence and then on the entire economy.

The audience at the SAP Center in San Jose was hanging on his every word.

Huang focused on Nvidia's new generation of chips and the two factors that make AI work: the training (or programming) that enables the semiconductors to receive data, recognize and organize it, and send it back out to a client in usable form; and the brute computing power to make it all happen.

Nvidia's influence on artificial intelligence is already substantial, thanks to its H100 GPU chips and related products which power most AI applications now.

The Blackwell platform, expected to be available toward the end of 2024, will use a new series of chips, the B200 family, combined with new components and software to get the most out of the chips.

The goal is to let a user pack more training onto chips so these chips and the components built around them can recognize data more quickly.

The chips are also supposed to deliver more inference capacity: the ability to analyze data and produce usable answers to queries and questions.

Blackwell is supposed to offer up to 4 times the training performance of Nvidia's wildly popular H100 chip and up to 30 times the inference output.

Add more of these chips into the system, and you can gather more data and translate it into more usable information almost instantaneously.

Nvidia is building other equally fast components into the platform so that information flows in and out swiftly and, just as importantly, smoothly, all while using a lot less power.

It is easy to see how these chips could cut across a slew of industries by making them more productive and efficient. For most corporations, the brains of the operation will be an algorithm running on an Nvidia chip.

Demand for these products will go through the roof, coming from industries like logistics, industrials, transport, consumer products, finance, and so on.

Nvidia will supercharge business everywhere.

I will keep tabs on how the Blackwell platform performs, but it is hard to envision it failing because Nvidia’s reputation precedes it.

This could also trigger another leg up in the bull market in tech stocks.