Tesla's vision for the future of transportation extends far beyond electric vehicles. Elon Musk has long touted the development of a fully autonomous Robotaxi service, a concept that could revolutionize ride-hailing and personal transportation as we know it. While the road to realizing this ambitious goal has been paved with delays and technological hurdles, recent developments suggest that Tesla's Robotaxi aspirations are gaining momentum.

This article delves into the intricacies of Tesla's Robotaxi program, exploring its technology, potential impact, challenges, and the broader context of the autonomous vehicle landscape.

The Vision: A Fleet of Self-Driving Taxis

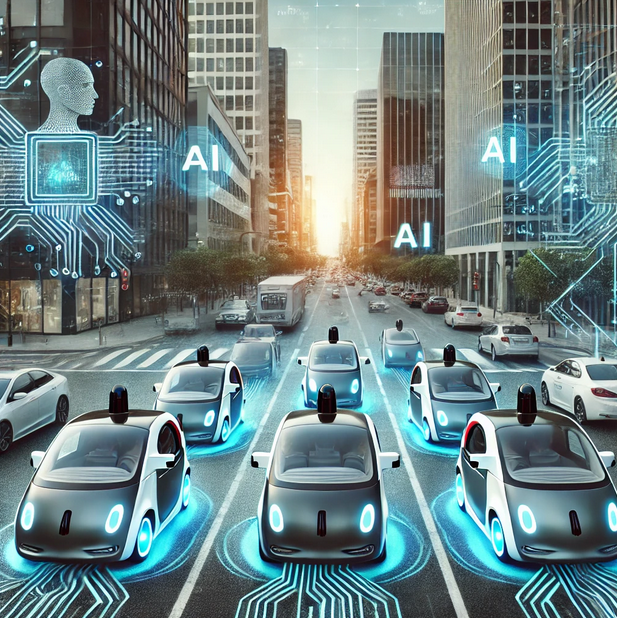

Imagine hailing a ride and having a driverless vehicle arrive at your doorstep, ready to whisk you away to your destination. This is the essence of Tesla's Robotaxi vision. The company aims to deploy a fleet of fully autonomous electric vehicles that can operate without human intervention, providing on-demand transportation services to passengers.

This concept is not entirely new. Companies like Waymo and Cruise have tested and deployed Robotaxi services in select cities with varying degrees of success. Tesla's approach differs in its ambition and in how tightly it is integrated with the company's existing electric vehicle ecosystem.

The Technology: Full Self-Driving and Beyond

At the heart of Tesla's Robotaxi program lies its Full Self-Driving (FSD) technology. FSD is a suite of advanced driver-assistance systems that enables Tesla vehicles to navigate roads, respond to traffic signals, and perform complex maneuvers with minimal human input. While FSD is still under development and requires driver supervision, it represents a crucial step towards achieving full autonomy.

Tesla's Robotaxi fleet will likely rely on a more advanced version of FSD, potentially with improved AI, sensor technology, and real-time data processing. The company has hinted at a dedicated "Robotaxi" model optimized for autonomous operation and passenger comfort; the suggestion that it might be based on the Cybertruck platform remains speculative.

Potential Impact: Reshaping Transportation and Beyond

The successful deployment of Tesla's Robotaxi service could have far-reaching implications for the transportation industry and society at large. Here are some potential impacts:

- Revolutionizing Ride-Hailing: Robotaxis could disrupt the ride-hailing industry by offering cheaper, more efficient, and potentially safer rides compared to traditional human-driven services.

- Reducing Traffic Congestion: Autonomous vehicles could, in principle, smooth traffic flow and reduce congestion by coordinating with each other and with road infrastructure, leading to more efficient commutes.

- Increasing Accessibility: Robotaxis could provide affordable and convenient transportation options for individuals who cannot drive, such as the elderly or people with disabilities.

- Transforming Urban Planning: The widespread adoption of Robotaxis could influence urban planning and development, potentially reducing the need for parking spaces and promoting more pedestrian-friendly environments.

- Creating New Economic Opportunities: The Robotaxi industry could generate new jobs in areas such as software development, maintenance, and fleet management.

Challenges and Concerns: Navigating the Road to Autonomy

While the potential benefits of Tesla's Robotaxi program are significant, several challenges and concerns need to be addressed before widespread adoption can occur:

- Technological Hurdles: Achieving full autonomy is a complex technological challenge. The system must handle unpredictable human behavior and adverse weather conditions while remaining safe and reliable.

- Regulatory Uncertainty: The regulatory landscape for autonomous vehicles is still evolving, and clear guidelines and standards need to be established to ensure public safety and address liability issues.

- Public Acceptance: Overcoming public apprehension and building trust in autonomous technology is crucial for the success of Robotaxi services.

- Ethical Considerations: Questions surrounding job displacement, data privacy, and the ethical implications of AI decision-making need to be carefully considered and addressed.

- Cybersecurity Risks: Ensuring the cybersecurity of autonomous vehicles is paramount to prevent hacking and malicious attacks that could compromise safety and functionality.

The Road Ahead: A Gradual Rollout and Continuous Development

Tesla's Robotaxi program is still in its early stages, and a full-scale rollout is not expected in the immediate future. The company is likely to adopt a gradual approach, starting with limited deployments in controlled environments and expanding its service area as the technology matures and regulations evolve.

Continuous development and refinement of FSD technology will be crucial for the success of Tesla's Robotaxi ambitions. The company will need to address the challenges and concerns outlined above while ensuring the safety, reliability, and affordability of its service.

Conclusion: A Transformative Vision with a Long Road Ahead

Tesla's Robotaxi program represents a bold vision for the future of transportation, one that could reshape our cities, redefine personal mobility, and create new economic opportunities. While the road to full autonomy is paved with challenges, Tesla's relentless pursuit of innovation and its growing expertise in AI and electric vehicle technology position it as a key player in the race to deploy Robotaxi services.

As technology advances and regulations evolve, the prospect of hailing a driverless Tesla taxi may become a reality sooner than we think. The journey towards this vision promises to be exciting and challenging, and it may ultimately mark a defining moment in the evolution of transportation.